May 8, 2026

Ranking Beyond Binary Relevance: mxbai-rerank-v3-listwise

15 min read

Mixedbread Team

When we released Wholembed v3, the retrieval quality bar moved up sharply enough that most rerankers stopped helping. Pointwise rerankers, which score documents independently and sort by score, struggled to add value on top of strong first-stage results. On harder corpora, they actively made the results worse.

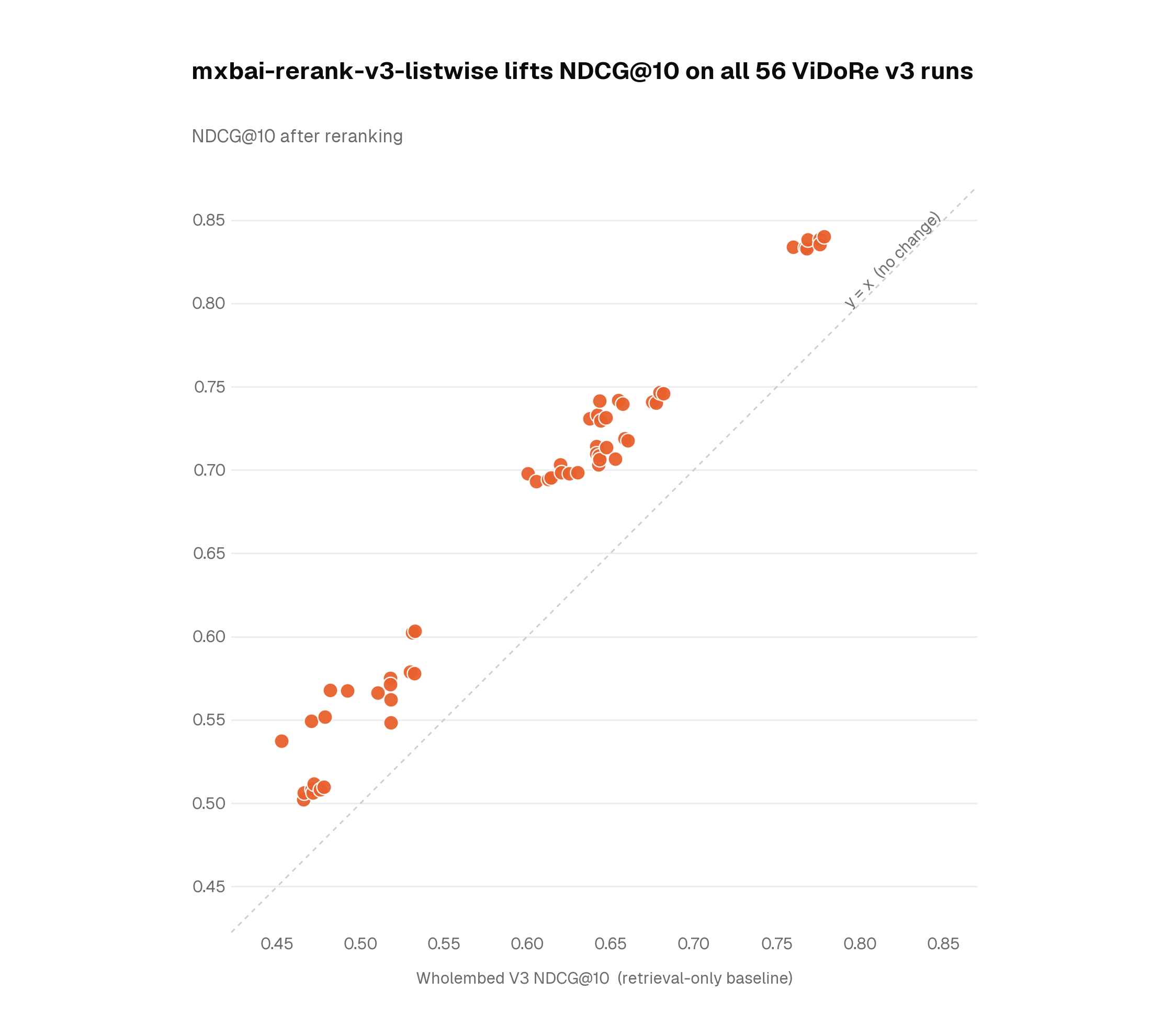

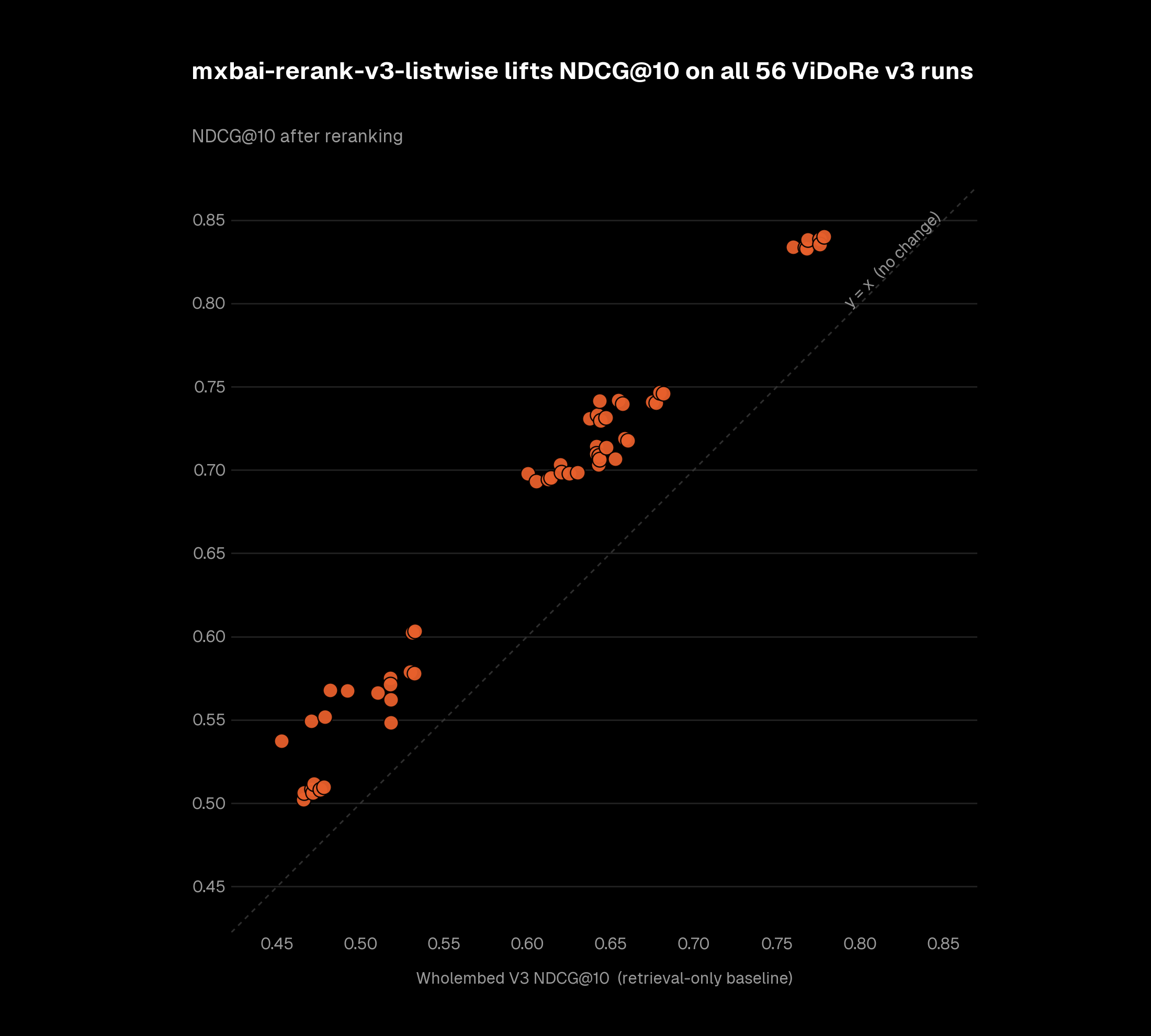

Today we are releasing mxbai-rerank-v3-listwise, a listwise reranking model co-designed with Wholembed v3. It is the first reranker we have shipped that improves results on every domain, language, and benchmark we tested: it lifts all 56 ViDoRe v3 runs, by 11% NDCG@10 on average. It also follows instructions well. You can steer ranking with natural-language directives, like prioritizing recent documents, preferring internal sources, or resolving conflicts between knowledge bases.

mxbai-rerank-v3-listwise is available in preview today as part of Mixedbread Search.

Better Reranking for Stronger Retrieval

On ViDoRe v3, a benchmark for real-world document retrieval, Wholembed v3 alone averaged 0.603 NDCG@10 [1]. Adding mxbai-rerank-v3-listwise lifted every one of the 56 runs, with an average gain of 11%.

| Setting | NDCG@10 (avg, 56 runs) | Δ vs. retrieval-only |
|---|---|---|
| Wholembed v3 only | 0.603 | - |
| + mxbai-rerank-v3-listwise | 0.669 | +10.92% |

The biggest gains came on the harder, lower-baseline subsets: industrial documents in German went up 18.8%, HR in French 16.3%. These are the kinds of corpora where small ranking errors compound into worse downstream answers.
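For readers who want to see what the metric measures, here is a minimal sketch of NDCG@10 and of the relative-gain arithmetic. It assumes the linear-gain variant of DCG and uses made-up relevance labels; it is not the evaluation harness behind the numbers above.

```python
import math


def dcg_at_k(relevances, k=10):
    """Discounted cumulative gain over the top-k graded relevance labels."""
    return sum(rel / math.log2(rank + 2) for rank, rel in enumerate(relevances[:k]))


def ndcg_at_k(relevances, k=10):
    """DCG of the given ranking divided by the DCG of the ideal ordering."""
    ideal = dcg_at_k(sorted(relevances, reverse=True), k)
    return dcg_at_k(relevances, k) / ideal if ideal > 0 else 0.0


# Hypothetical graded labels for one query, in the order each system ranked them.
retrieval_only = [1, 0, 2, 0, 1]
reranked = [2, 1, 1, 0, 0]

ndcg_before = ndcg_at_k(retrieval_only)
ndcg_after = ndcg_at_k(reranked)

# Relative gain computed from the rounded averages reported above.
baseline, with_rerank = 0.603, 0.669
relative_gain = (with_rerank - baseline) / baseline
```

With the rounded averages, `relative_gain` comes out at roughly 10.9%. The small difference from the 10.92% in the table is presumably because that figure was computed from unrounded scores.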

Comparative Ranking with Instruction Following

mxbai-rerank-v3-listwise is a listwise reranker. Unlike pointwise rerankers, which focus on the relevance of individual documents, it reads the candidate set as a whole and can resolve conflicts between candidates.

Because it follows instructions well, you can tell it to prefer newer documents, prioritize internal sources over external summaries, or favor primary sources over commentary.

A booking confirmation may be superseded by a later cancellation. A product spec may override a launch note. A financial filing may be more authoritative than a same-day article.
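The difference between the two approaches can be illustrated with a toy sketch. Everything here is hypothetical: the `Doc` fields, the word-overlap score, and the "newest document in a topic wins" rule are stand-ins chosen for illustration. The actual model is learned and reads the candidate text directly; this only shows why seeing the whole candidate set matters.

```python
from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class Doc:
    doc_id: str
    topic: str    # hypothetical key linking documents about the same thing
    issued: date
    text: str


def overlap(query, doc):
    """Toy relevance: number of distinct query words found in the document."""
    words = set(doc.text.lower().split())
    return sum(1 for w in set(query.lower().split()) if w in words)


def pointwise_rank(query, docs):
    """Score each document on its own, then sort by score."""
    return sorted(docs, key=lambda d: overlap(query, d), reverse=True)


def listwise_rank(query, docs):
    """Look at the whole set: within a topic, the newest document is current."""
    newest = {}
    for d in docs:
        if d.topic not in newest or d.issued > newest[d.topic].issued:
            newest[d.topic] = d
    # Current documents sort first; toy relevance breaks ties.
    return sorted(
        docs,
        key=lambda d: (newest[d.topic] is d, overlap(query, d)),
        reverse=True,
    )


docs = [
    Doc("confirmation", "london-flight", date(2026, 1, 10),
        "booking confirmation flight to london departs 9am"),
    Doc("cancellation", "london-flight", date(2026, 3, 2),
        "your london flight booking was cancelled"),
]
query = "flight to london booking confirmation"

pointwise_top = pointwise_rank(query, docs)[0].doc_id
listwise_top = listwise_rank(query, docs)[0].doc_id
```

In this toy setup the pointwise ranking puts the January confirmation first because it shares more words with the query, while the set-aware ranking puts the March cancellation first because it supersedes the confirmation.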

We benchmarked mxbai-rerank-v3-listwise against the strongest pointwise rerankers available on a 900-example instruction-following evaluation covering recency-aware ranking, source-priority resolution, and multi-step composite instructions [2].

| Reranker | MRR | Accuracy@1 |
|---|---|---|
| mxbai-rerank-v3-listwise | 0.93 | 88.6% |
| Voyage rerank-2.5 | 0.84 | 77.4% |
| Cohere Rerank 4 Pro | 0.77 | 68.4% |
| ZeroEntropy zerank-2 | 0.71 | 60.3% |

Much of the gap comes from recency-aware ranking, where the model has to understand that a March schedule change supersedes a January booking confirmation, or that Q1 FY27 guidance supersedes Q4 FY26 guidance.
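As a reference for the two metrics in the table, here is a small sketch of how MRR and Accuracy@1 are typically computed. It assumes each example has exactly one correct document, which may not match the actual evaluation setup, and the example data is invented.

```python
def reciprocal_rank(ranked_ids, correct_id):
    """1 / position of the correct document, or 0.0 if it is not in the list."""
    for position, doc_id in enumerate(ranked_ids, start=1):
        if doc_id == correct_id:
            return 1.0 / position
    return 0.0


def evaluate(examples):
    """examples: list of (ranked_ids, correct_id). Returns (MRR, Accuracy@1)."""
    rr = [reciprocal_rank(ranked, correct) for ranked, correct in examples]
    mrr = sum(rr) / len(rr)
    # Accuracy@1 counts only the examples whose correct document is ranked first.
    acc_at_1 = sum(1 for r in rr if r == 1.0) / len(rr)
    return mrr, acc_at_1


# Hypothetical reranker outputs paired with the single correct document id.
examples = [
    (["b", "a", "c"], "b"),  # correct document at rank 1
    (["a", "b", "c"], "b"),  # correct document at rank 2
    (["a", "c", "b"], "c"),  # correct document at rank 2
    (["c", "a", "b"], "c"),  # correct document at rank 1
]
mrr, acc_at_1 = evaluate(examples)
```

Accuracy@1 only rewards putting the right document first, while MRR gives partial credit for near misses, which is why the MRR column is consistently higher than the Accuracy@1 column.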

How Listwise Changes the Answer

To make the difference concrete, here are five cases where pointwise and listwise reranking diverge under an explicit instruction.

In one of them, a question about the operative indemnification cap under §12 of an agreement, the Code of Conduct is keyword-dense for "indemnification" but belongs to a different agreement, and Amendment 4 is the most recent document but doesn't touch §12. Listwise traces the §12 chain (base → scope rewrite → cap reset) to identify Amendment 3 as the operative cap, sitting on top of Amendment 2's scope.
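The chain-tracing logic in this case can be written out as a small sketch. The data model is entirely hypothetical (the `ContractDoc` fields, the agreement labels "MSA" and "Conduct", and the sections other than §12 are invented for illustration), and a learned reranker does not run explicit code like this; the point is only to make the reasoning steps visible.

```python
from dataclasses import dataclass, field


@dataclass
class ContractDoc:
    name: str
    order: int       # hypothetical position in the document timeline
    agreement: str   # which agreement the document belongs to
    changes: dict = field(default_factory=dict)  # section -> aspects it sets


def operative(docs, agreement, section):
    """For each aspect of a section, return the latest document that set it."""
    latest = {}
    for doc in sorted(docs, key=lambda d: d.order):
        if doc.agreement != agreement:
            continue  # skip documents from a different agreement
        for aspect in doc.changes.get(section, []):
            latest[aspect] = doc.name
    return latest


docs = [
    ContractDoc("Base agreement", 0, "MSA", {"§12": ["scope", "cap"]}),
    ContractDoc("Code of Conduct", 1, "Conduct", {"§5": ["indemnification"]}),
    ContractDoc("Amendment 2", 2, "MSA", {"§12": ["scope"]}),
    ContractDoc("Amendment 3", 3, "MSA", {"§12": ["cap"]}),
    ContractDoc("Amendment 4", 4, "MSA", {"§7": ["term"]}),
]

result = operative(docs, "MSA", "§12")
```

Here `result` maps the scope to Amendment 2 and the cap to Amendment 3. Amendment 4 is newer but never touches §12, and the Code of Conduct is filtered out because it belongs to another agreement.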

One API

mxbai-rerank-v3-listwise is available in preview today through Mixedbread Search:

```python
from mixedbread import Mixedbread

client = Mixedbread()

results = client.stores.search(
    store_identifiers=["my-store"],
    query="when is my flight to London? The most recent valid booking wins",
    search_options={
        "rerank": {
            "model": "mixedbread-ai/mxbai-rerank-v3-listwise",
        }
    },
)
```

New users get $5 free credits to try it.

If this is a problem you want to work on, we are hiring.

Footnotes

1. We use ViDoRe v3 because it is a widely used benchmark for real-world document retrieval over complex corpora, with multilingual and domain-specific subsets. The benchmark covers seven domains (computer science, energy, finance, HR, industrial, pharmaceuticals, physics) across seven languages, for 56 paired runs in total.

2. The evaluation is derived from real user corpora and search patterns, then converted into controlled ranking tasks. Examples are designed to test whether the reranker can resolve conflicts inside a candidate set, not just match query-document relevance.
